
In essence, indigenization makes something more native – transforming services or ideas to suit local culture, for example. The defense and sociology domains commonly use indigenization in their reports and studies. The ideology also fits in the cloud age within the information technology sector, where specific cloud platform vendor capabilities symbolize a local culture.

Cloud adoption is a common practice for those who have decided to embark on a digitization journey. Those running enterprise-grade software in physical data centers can opt for specific types of transformation or seek recommendations from cloud enablement experts on the appropriate kinds of cloud indigenization.

For example, some enterprise customers may need a lift and shift cloud migration based on legacy architectures. Others may want to leverage cloud-agnostic capabilities and platform rearchitecting and potentially consider retiring the applications built with age-old technology stacks. Finally, some companies (particularly those ahead of the game) may state that their application uses cloud-native technologies and now require guidance to optimize the enriched cloud capabilities.

Often, when companies seek cloud indigenization expertise, the information they provide is brief and unclear. There may be limited time for enterprise team stakeholders to understand all variables in optimizing the cloud. Therefore, the appointed team’s main task becomes explaining the overall costs so the stakeholders can approve them.

This article gives cloud technical leaders, practitioners, and architects the tools they need to explain the different variables involved in optimizing the cloud, such as time constraints and access to the ecosystem. The scenario discussed here assumes an application that is already ‘cloud-ready,’ currently serves customers from an on-premises data center, and will migrate to a specific cloud environment.

How far is cloud-ready from cloud-native?

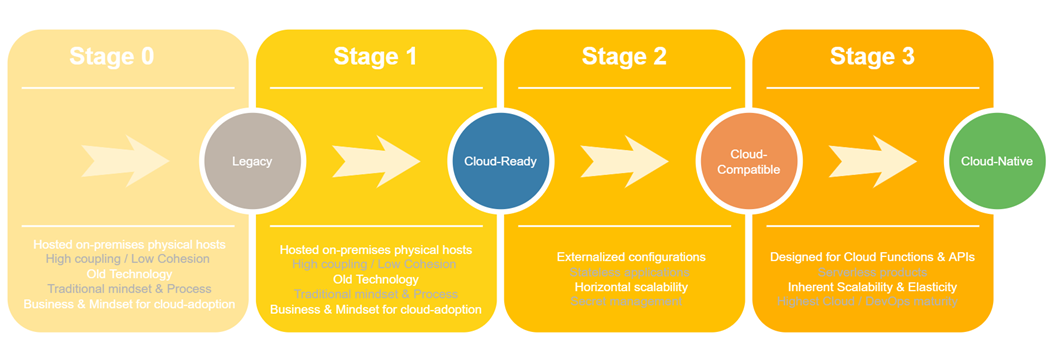

Terms related to the cloud tend to lack standard definitions and are often used inconsistently by technical stakeholders and business professionals. Let us first define the cloud terms so that we are on the same page for the purposes of this article.

“Cloud-ready” is used by business and technology experts. On the business front, its meaning describes a big-picture plan of an enterprise aspiring to modernize its application portfolio in all aspects. In addition, it means the readiness to adopt cloud principles, embrace the agile culture, and develop people skills to transform the applications based on cloud environments in all their true capabilities.

On the technology side, cloud-ready describes the state of on-premises hosted legacy applications rearchitected just enough to run on cloud-based infrastructure in the future. They can either be transferred into the cloud as-is or be broken into microservices and containerized while continuing to run on on-premises infrastructure.

To align them to cloud vendor services and capabilities, creators can then revamp the applications using cloud-native principles, technologies, and practices to lead their deployment and operations. Until then, applications tagged as cloud-ready cannot take full advantage of being in the cloud. The missing benefits include elastic scaling, running parallel instances, and increased resilience, because these applications still meet user demand through traditional capacity planning exercises. The creators can add these benefits later.

From a cloud maturity perspective, organizations can start modernizing their legacy applications by getting cloud-ready through containerization. The next step is to graduate cloud-ready applications to become cloud compatible and then optimize architecturally to be hosted on the cloud platforms to leverage the native features gradually.

“Cloud-native” is a term used to describe applications designed for specific cloud-based platforms. The official definition from CNCF states that creators can develop cloud-native applications as decoupled microservices running in containers managed by self-hosted platforms (including on-premises) or cloud platforms. Then the creators can extend it to a serverless architecture wherein the microservices run as serverless functions mostly behind an API gateway service.

Therefore, selecting the correct type of cloud service among various products for a specific workload or workflow is a detailed process. This includes leveraging cloud service providers’ proprietary technologies and APIs to access cloud security, cloud storage, backup solutions, disaster recovery, cloud testing, and more. Cloud service vendors can also provide inherent cloud optimization to all their clients. Being cloud-native can overlap with meanings of cloud-enabled or cloud-first applications simultaneously.

“Cloud-compatible” describes the state of an application between cloud-ready and cloud-native. In addition to being cloud-ready, these applications are typically stateless and have externalized configurations, secret management, and good observability. The apps can also scale horizontally.
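As a minimal sketch of what externalized configuration can look like, the snippet below reads settings from environment variables instead of hard-coding them. The variable names (`DB_URL`, `DB_PASSWORD`, `WORKER_COUNT`) and the `AppConfig` class are hypothetical and chosen only for illustration; a real application would follow its own naming and secret-management tooling.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class AppConfig:
    """Settings the application needs; none are baked into the container image."""
    db_url: str
    db_password: str   # assumed to be injected by a secret manager at deploy time
    worker_count: int


def load_config(env: Optional[Mapping[str, str]] = None) -> AppConfig:
    """Build the configuration from environment variables (hypothetical names)."""
    env = os.environ if env is None else env
    missing = [key for key in ("DB_URL", "DB_PASSWORD") if key not in env]
    if missing:
        # Fail fast so a misconfigured container never starts serving traffic.
        raise RuntimeError(f"missing required settings: {', '.join(missing)}")
    return AppConfig(
        db_url=env["DB_URL"],
        db_password=env["DB_PASSWORD"],
        worker_count=int(env.get("WORKER_COUNT", "2")),
    )
```

Because the same image can be started with different environment values, the application stays stateless with respect to its configuration, which is one of the traits that separates cloud-compatible from merely cloud-ready.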

Two core aspects for taking the cloud-ready applications to a cloud-native state include:

- Deploy and run the containerized microservices onto the cloud infrastructure.

- Analyze whether the cloud-ready application leverages DevOps and Agile principles.

The more clarity we have, the better the results. However, as discussed in the introduction, there may not always be an opportunity to get answers. When there is one, we should ask detailed questions like the ones below.

Containerized Applications

- Which container orchestration engine are you using?

- Is it Kubernetes or compliant with Kubernetes?

- Is it a variant of Kubernetes?

- Is it a heterogeneous container orchestration engine? If so, which one? e.g., Apache Mesos, Docker Swarm.

- If it is a Kubernetes application, is it self-managed using tools like kops, kubeadm, or similar?

- What is the source cluster’s actual usage of computing, storage, and network resources?

- What is the technology stack used by the platform where the source cluster is located?

- Is the application stateful or stateless?

- What are the dependencies among applications?

- Is service mesh used? If so, which is the existing service mesh platform?

Configurations

- Is the pattern of externalized configuration implemented for all sources?

- Are the secrets protected and managed well?

DevSecOps

- Is there an existing CI/CD subsystem?

- How are configurations and secrets managed?

- What are the security regulations and compliance terms?

- Which deployment strategy is used?

- What is the design of the container cluster for cybersecurity?

- What are on-premise operational practices for tools, people, and processes?

Observability

- Which monitoring, alerting and auditing subsystem is used?

- What are the log collection and analyzer subsystems?

Mobilization

- What is the accepted downtime window for migration?

- How will running the migration impact normal operations?

- Is the cloud landing zone already set up? In other words, is at least a baseline cloud setup in place to get started with a multi-account architecture? This should include identity and access management, governance, data security, network design, and logging configurations.

However, if there is no opportunity to get clarification on all questions, assume an existing landscape, recommend a migration approach with fair and transparent assumptions, and provide estimates accordingly. At the least, this demonstrates strong migration experience and creates the opportunity to tailor the effort in the future.

Taking a cloud-native approach

There are different approaches for evolving cloud-ready applications and making them cloud-native. You can create a suitable strategy based on information from the previous section. Below is an overview of the possible complexities for containerized applications running in a particular cloud.

| Container Orchestration | Complexity of Migration | How to Migrate |
| --- | --- | --- |
| Compatible Kubernetes | Simple to Moderate | If the CI/CD process exists, update the delivery/deployment pipeline and release it to the target cluster. Migrate data through cloud-native tools or third-party partner software. |
| Variant (e.g., OpenShift) | Moderate to Complex | Use a new CI/CD pipeline for packaging, delivery, and deployment. Migrate data with appropriate tools based on analysis of the source cluster. Consider the migration of network plugins and Ingress. |
| Heterogeneous (e.g., Mesos, Swarm) | Most Complex | Same approach as above. Use a new CI/CD pipeline for packaging, delivery, and deployment. Migrate data with appropriate tools based on analysis of the source cluster. Consider the migration of network plugins and Ingress. |
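The table above can be captured as a small lookup, which is handy when the same classification feeds an estimation template. This is only a sketch: the `Orchestrator` enum and `migration_plan` function are hypothetical names, and the step for a compatible Kubernetes cluster without an existing pipeline is an assumption, since the table does not cover that case.

```python
from enum import Enum
from typing import Dict, List, Union


class Orchestrator(Enum):
    COMPATIBLE_KUBERNETES = "Compatible Kubernetes"
    KUBERNETES_VARIANT = "Variant"        # e.g., OpenShift
    HETEROGENEOUS = "Heterogeneous"       # e.g., Mesos, Swarm


# Complexity bands copied from the table.
COMPLEXITY = {
    Orchestrator.COMPATIBLE_KUBERNETES: "Simple to Moderate",
    Orchestrator.KUBERNETES_VARIANT: "Moderate to Complex",
    Orchestrator.HETEROGENEOUS: "Most Complex",
}


def migration_plan(
    orchestrator: Orchestrator, has_cicd: bool
) -> Dict[str, Union[str, List[str]]]:
    """Return the complexity band and high-level steps for a source cluster."""
    if orchestrator is Orchestrator.COMPATIBLE_KUBERNETES:
        if has_cicd:
            pipeline = "Update the existing CI/CD pipeline and release to the target cluster"
        else:
            # Assumption: not stated in the table.
            pipeline = "Create a CI/CD pipeline for the target cluster"
        steps = [
            pipeline,
            "Migrate data through cloud-native tools or third-party partner software",
        ]
    else:
        steps = [
            "Use a new CI/CD pipeline for packaging, delivery, and deployment",
            "Migrate data with tools chosen from analysis of the source cluster",
            "Consider the migration of network plugins and Ingress",
        ]
    return {"complexity": COMPLEXITY[orchestrator], "steps": steps}
```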

Keep the development process as simple as possible when lacking detailed information or clarification. For estimation purposes, assume that:

- The on-premises application cluster is a compatible Kubernetes cluster that can be hosted on cloud-managed Kubernetes services like Amazon EKS.

- A complete DevOps setup with mature operational capabilities already exists.

- The application landscape consists of both stateless and stateful applications.

Under these assumptions, treat the migration as simple-to-moderate in complexity. At a high level, the migration methodology can then include the following aspects:

- Update the existing CI/CD pipeline so that it deploys correctly to the required cloud environment, then use it to deploy the stateless applications.

- Then, you can migrate the data from stateful applications using cloud-native tools. For example, if the target cloud is AWS, use AWS migration tools like DMS or MGN for the database. Similarly, you should migrate the data from the content server and other storage using AWS native offerings like AWS DataSync or AWS Transfer Family to Amazon S3 or Amazon EFS, depending on the type of data and the use case. Finally, if needed, you can use AWS Snowball.
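Whether an offline option such as AWS Snowball is "needed" usually comes down to simple arithmetic: how long would an online transfer take compared with the accepted migration window? The sketch below works that out. The function names and the 80% default link utilization are assumptions for illustration, not figures from any vendor guidance.

```python
def transfer_days(size_tb: float, link_gbps: float, utilization: float = 0.8) -> float:
    """Estimate the days needed to move size_tb (decimal terabytes) over a network link.

    utilization is the assumed share of the link the migration can actually use.
    """
    if size_tb < 0 or link_gbps <= 0 or not 0 < utilization <= 1:
        raise ValueError("size must be >= 0, link speed > 0, utilization in (0, 1]")
    bits_to_move = size_tb * 1e12 * 8
    usable_bits_per_second = link_gbps * 1e9 * utilization
    seconds = bits_to_move / usable_bits_per_second
    return seconds / 86_400  # seconds per day


def fits_window(size_tb: float, link_gbps: float, window_days: float,
                utilization: float = 0.8) -> bool:
    """True if the online transfer is estimated to finish within the window."""
    return transfer_days(size_tb, link_gbps, utilization) <= window_days
```

For example, 50 TB over a 1 Gbps link at 80% utilization comes to roughly 5.8 days. If the accepted window is shorter than that, an offline transfer or a phased migration becomes an estimation item of its own.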

List all other minor assumptions to support this strategy accordingly.

Early Estimation Areas

As mentioned earlier, a precise estimate for the migration strategy depends on the assessment and clarifications. An early end-to-end estimate therefore requires a balanced approach of knowns, unknowns, and assumptions. Most migrations that transform legacy or cloud-native applications into cloud-optimized ones involve the areas below.

Landing Zone

A cloud platform landing zone is an environment for hosting workloads that enables multiple accounts for scale, security governance, networking, and identity. Cloud providers offer various options, archetypes, and accelerators for specific scenarios, which you should leverage for estimates.

Cloud Native Resources

The cloud infrastructure’s minimum resources include the managed container cluster, storage services for sessions or caching, content files, databases, integration services, and logging and monitoring services according to the architecture. It also includes configuring and labeling the network and working nodes.

The required infrastructure on the target cloud should preferably be pre-provisioned through code, using cloud-native tools like AWS CloudFormation for AWS or cloud-agnostic tools like Terraform.
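To illustrate provisioning through code, the sketch below builds a small CloudFormation template as a Python dictionary and serializes it to JSON. It declares only a managed Kubernetes cluster and one storage bucket; the logical IDs (`AppCluster`, `ContentBucket`), parameter names, and the `build_template` helper are hypothetical, and the template has not been validated against a live account.

```python
import json


def build_template(cluster_name: str, bucket_name: str) -> dict:
    """Return a minimal CloudFormation template for an EKS cluster and an S3 bucket."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": "Illustrative baseline: managed container cluster plus content storage",
        "Parameters": {
            # Supplied at deploy time; the IAM role and subnets are assumed to exist already.
            "ClusterRoleArn": {"Type": "String"},
            "SubnetIds": {"Type": "List<AWS::EC2::Subnet::Id>"},
        },
        "Resources": {
            "AppCluster": {
                "Type": "AWS::EKS::Cluster",
                "Properties": {
                    "Name": cluster_name,
                    "RoleArn": {"Ref": "ClusterRoleArn"},
                    "ResourcesVpcConfig": {"SubnetIds": {"Ref": "SubnetIds"}},
                },
            },
            "ContentBucket": {
                "Type": "AWS::S3::Bucket",
                "Properties": {
                    "BucketName": bucket_name,
                    "VersioningConfiguration": {"Status": "Enabled"},
                },
            },
        },
    }


def render(template: dict) -> str:
    """Serialize the template to JSON text that can be stored in version control."""
    return json.dumps(template, indent=2, sort_keys=True)
```

Keeping the template in version control gives the estimate a concrete inventory of resources to count, and the same definition can be reused across environments.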

Observability

The monitoring and logging systems can operate the same as those in the existing environment or may be required to complement the native services provided by the cloud.

Security, Risk, and Compliance

Security in the cloud is a shared responsibility, and the vulnerability spectrum is more extensive in the cloud. Therefore, the teams involved need to estimate container security considerations. These include, but are not limited to, image security, container privileges, host isolation, and application layer sharing.

Other layers to consider for cloud deployments are the network, orchestrator, data, runtime, and host. An existing private data center environment may not have applied all of these security considerations, so prepare an exhaustive list even for early estimations.
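A few of these container security considerations can be checked mechanically. The sketch below inspects a Kubernetes pod spec, represented as a plain dictionary, for unpinned image tags, privileged containers, and shared host namespaces. The `audit_pod_spec` function is a hypothetical helper, it covers only three of the considerations listed, and it is not a substitute for a proper policy engine or image scanner.

```python
from typing import List


def audit_pod_spec(pod_spec: dict) -> List[str]:
    """Return human-readable findings for a Kubernetes pod spec given as a dict."""
    findings: List[str] = []
    pod_security = pod_spec.get("securityContext", {})

    # Host isolation: the pod should not share the node's network or PID namespace.
    if pod_spec.get("hostNetwork") or pod_spec.get("hostPID"):
        findings.append("host isolation: pod shares a host namespace")

    for container in pod_spec.get("containers", []):
        name = container.get("name", "<unnamed>")
        image = container.get("image", "")
        security = container.get("securityContext", {})

        # Image security: require an explicit tag or digest other than 'latest'.
        last_segment = image.split("/")[-1]
        pinned = (":" in last_segment or "@" in last_segment) and not image.endswith(":latest")
        if not pinned:
            findings.append(f"image security: {name} uses an unpinned image")

        # Container privileges: flag privileged mode and possible root execution.
        if security.get("privileged"):
            findings.append(f"container privileges: {name} runs privileged")
        run_as_non_root = security.get("runAsNonRoot", pod_security.get("runAsNonRoot", False))
        if not run_as_non_root:
            findings.append(f"container privileges: {name} may run as root")

    return findings
```

Running a check like this against the manifests of the source cluster gives an early count of remediation items, which can then feed the security line of the estimate.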

Cloud Governance

A holistic cloud governance model enables enterprises to drive the cloud indigenization culture. The core functions include:

- Implement security and safety measures to minimize data vulnerabilities (discussed above).

- Define best practices and drive policies in cloud adoption that align with industry standards.

- Create a continuous improvement plan along with reusable and preconfigured resources.

- Optimize costs and enhance visibility through advanced analytics and reporting.

- Automate and scale processes and infrastructure as and when required. Also, automate metering, monitoring, and chargeback policies and processes.

Teams must design the governance functions described above as either centralized or decentralized, based on the business goals. The cloud’s SLIs (service level indicators) and SLOs (service level objectives) differ from those on-premises. It’s necessary to consider the security, risk, and compliance aspects along with availability, latency, and throughput. If visibility into the business requirements or access to product owners is limited, adopt a reference cloud-native governance model for estimation purposes.
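To make the SLI and SLO terms concrete, here is a small worked example for availability. It uses the common request-based definition of an availability SLI and the notion of an error budget; both are standard reliability-engineering conventions rather than something this article prescribes, and the function names are hypothetical.

```python
def availability_sli(good_requests: int, total_requests: int) -> float:
    """Availability SLI: the share of requests that were served successfully."""
    if total_requests <= 0:
        raise ValueError("total_requests must be positive")
    return good_requests / total_requests


def error_budget_minutes(slo: float, window_days: int = 30) -> float:
    """Minutes of full downtime that an availability SLO tolerates over the window."""
    if not 0 < slo < 1:
        raise ValueError("slo must be a fraction between 0 and 1")
    return (1.0 - slo) * window_days * 24 * 60


def meets_slo(sli: float, slo: float) -> bool:
    """True when the measured indicator satisfies the objective."""
    return sli >= slo
```

A 99.9% availability objective over 30 days leaves about 43.2 minutes of error budget. Comparing that figure with the downtime the on-premises system tolerated today is a quick way to show stakeholders how the objectives change after migration.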

Delivery and Deployment

Teams must consider the plan, design, and effort required to deploy to lower and higher environments, including at least Dev, QA, and stage as preproduction environments, and at least one production environment. If a CI/CD pipeline exists, the teams can update it or create a new one native to the cloud.

Cutover Plan and Dry Run

All stakeholders should prepare a concrete plan and checklist covering the possible impacts and their mitigations. This exercise requires many iterations and is crucial to the integration process. Unfortunately, this work item often gets ignored or underestimated, so it deserves a specific callout in the estimate.
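The estimation areas above can be rolled up into a single early estimate while keeping the assumptions visible. The sketch below tags each work item as known, assumed, or unknown and applies a contingency factor per tag. All person-day figures passed in and the contingency percentages are placeholders, and the `WorkItem` and `roll_up` names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Iterable

# Placeholder contingency factors; calibrate them to your own delivery history.
CONTINGENCY = {"known": 0.10, "assumed": 0.25, "unknown": 0.50}


@dataclass
class WorkItem:
    area: str            # e.g., "Landing Zone", "Cutover Plan and Dry Run"
    person_days: float   # base effort before contingency
    basis: str           # "known", "assumed", or "unknown"
    note: str = ""       # the assumption to show stakeholders


def roll_up(items: Iterable[WorkItem]) -> Dict[str, float]:
    """Sum base effort and contingency across all work items."""
    base = 0.0
    contingency = 0.0
    for item in items:
        if item.basis not in CONTINGENCY:
            raise ValueError(f"unknown basis for {item.area}: {item.basis}")
        base += item.person_days
        contingency += item.person_days * CONTINGENCY[item.basis]
    return {"base": base, "contingency": contingency, "total": base + contingency}
```

Listing the `note` field next to each line in the final estimate keeps the assumptions transparent, so the numbers can be revised once clarifications arrive.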

Key Takeaways

Being cloud-ready is not the same as being cloud-native.

The technology world is full of language and vocabulary that different stakeholders can interpret differently. As digital transformation leaders, we should translate the technical terms and the marketing claims back into plain language to make informed decisions for ourselves and our customer organizations.

The initial estimates are critical to any transformation.

An early estimate helps to formulate indigenization strategies, provides a basis to plan and execute engineering and delivery, and serves as a baseline for changes. In addition, estimates presented through a sound, well-thought-out work item structure, with the approximate person effort each item requires, give a competitive edge for overall cost approvals from financial executives and board members.

GlobalLogic can help.

Whatever stage you are at within your cloud-ready and cloud-native journey, GlobalLogic has the experience in technologies and services to partner with you and enable you to accelerate your journey.

We have also developed blueprints and frameworks meshing the reusable cloud patterns with industry best practices and sound architecture principles:

- Templates to provide the initial estimates

- Frameworks to assess cloud-ready states and enable them to become cloud-native. See Cloudwave.

- Accelerator to bring legacy applications to a cloud-ready state through microservices and containerization. See MSA.

- Accelerator to modernize legacy data platforms and make them cloud-optimized. See DPA.

- Accelerator for cloud-native infrastructure setup. See OpeNgine.

- Cloud-native Quality Assurance as a platform service. See ScaleQA.
